Building AI Interfaces: Why We Went All-In on the TanStack Ecosystem

Rendering streaming LLM responses and complex agent workflows breaks standard React patterns. Here's why the entire TanStack ecosystem was the right choice.

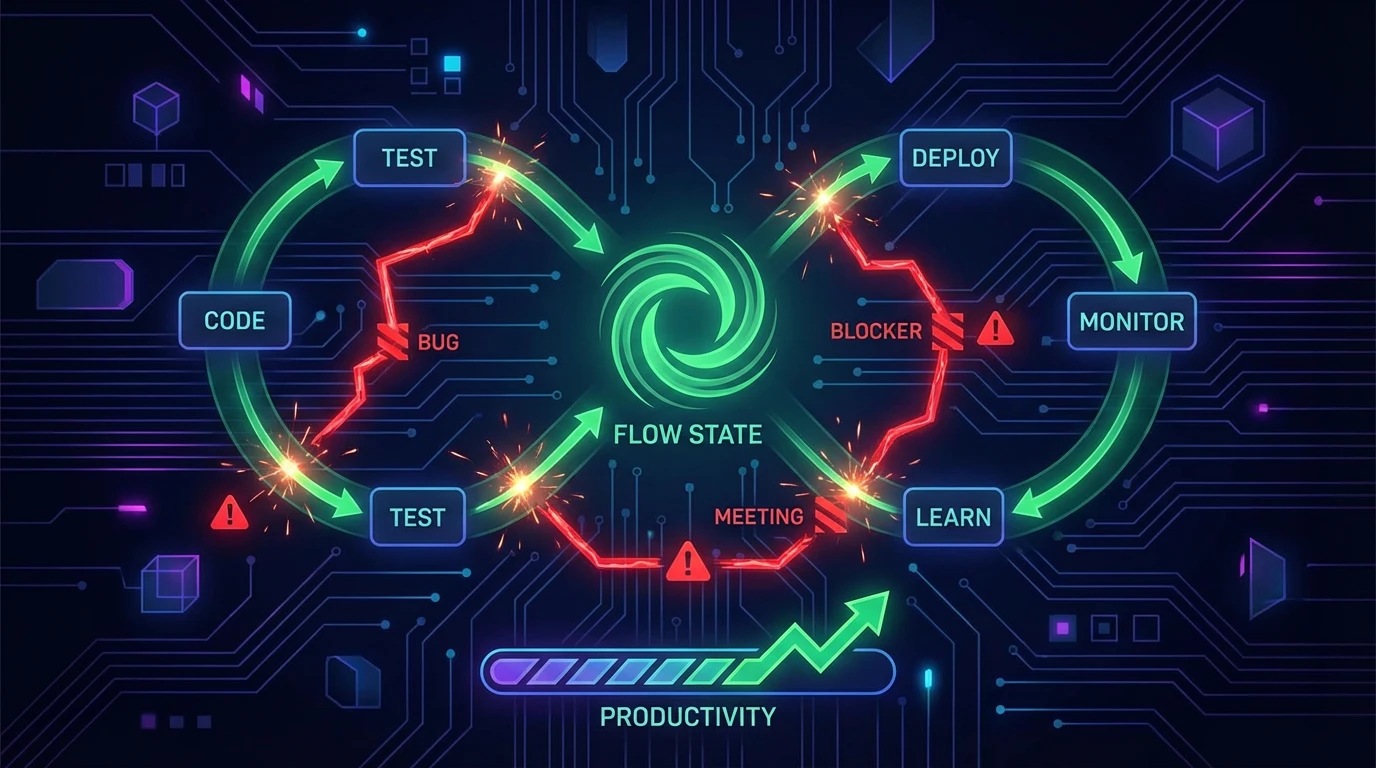

Building UIs for AI applications is fundamentally different from building traditional CRUD apps.

In a CRUD app, state is binary: loading or loaded. Data is static until the user mutates it. In an AI app, state is a river. LLM responses stream in token by token. Agent workflows execute asynchronously, emitting status events, tool calls, and human-in-the-loop approval requests over WebSockets.

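Standard React patterns collapse under this weight. Chains of useEffect hooks become tangled. Redux requires too much boilerplate for high-frequency updates. A Context value that changes on every token re-renders every component that consumes it, causing massive re-render cascades.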

When building the frontend for my project, I needed an architecture that could handle extreme reactivity without sacrificing performance. I went all-in on the TanStack ecosystem.

My apps/web directory uses TanStack Start (built on TanStack Router), TanStack Query, TanStack Store, and TanStack Form.

It's rare that I adopt an entire ecosystem from one creator. But in this case, the pieces interlock perfectly.

The hardest part of an AI chat interface isn't the chat bubbles. It's the execution stream.

When a user asks an agent to do something, the backend emits Server-Sent Events (SSE):

```
{"type": "node_start", "node": "fetch_data"}
{"type": "tool_call", "tool": "search_db", "status": "running"}
{"type": "tool_result", "data": "..."}
{"type": "text_delta", "content": "Here is"}
{"type": "text_delta", "content": " what I found"}
```
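On the wire, each of those payloads arrives as a `data:` line. The browser's `EventSource` hands you the payload in `event.data`, but `EventSource` can only issue GET requests; when the stream is read through `fetch` instead (for example, because the request needs a POST body), the lines have to be parsed by hand. A minimal sketch, assuming each event's JSON fits on a single `data:` line. The `StreamEvent` union and `parseSseLine` are hypothetical names modeled on the events above, not the project's actual code:

```typescript
// Hypothetical event shapes, mirroring the JSON payloads above.
type StreamEvent =
  | { type: 'node_start'; node: string }
  | { type: 'tool_call'; tool: string; status: string }
  | { type: 'tool_result'; data: string }
  | { type: 'text_delta'; content: string }

const KNOWN_TYPES = new Set(['node_start', 'tool_call', 'tool_result', 'text_delta'])

// Parse one line of an SSE stream. Only `data:` lines carry a payload;
// comment lines (starting with ":"), blank lines, and unknown event types are ignored.
// Assumption: one JSON object per `data:` line (multi-line data is not handled).
function parseSseLine(line: string): StreamEvent | null {
  if (!line.startsWith('data:')) return null
  const payload = line.slice('data:'.length).trim()
  try {
    const parsed = JSON.parse(payload)
    return parsed && KNOWN_TYPES.has(parsed.type) ? (parsed as StreamEvent) : null
  } catch {
    // Malformed JSON: drop the line rather than crash the whole stream
    return null
  }
}
```

Typing the events as a discriminated union means a `switch (event.type)` narrows each branch, so a `tool_call` handler can read `event.tool` without casts.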

If you push this stream into React state at the top of the chat (setMessages), every token triggers a re-render of the entire chat history. At 50 tokens per second, that's 50 full-history re-renders every second, and the browser freezes.

TanStack Store is framework-agnostic and incredibly fast. It allows for fine-grained reactivity.

Instead of React state, the stream updates a Store. The UI components subscribe only to the specific message or execution step they care about.

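```tsx
import { Store, useStore } from '@tanstack/react-store'

type Message = { id: string; content: string }

// The store handles the high-frequency mutations; messages are keyed by id
export const streamStore = new Store({
  messages: {} as Record<string, Message>,
  activeExecution: null as string | null,
})

// The component only re-renders when its specific message changes
export function MessageBubble({ id }: { id: string }) {
  const message = useStore(streamStore, (state) => state.messages[id])
  return <p>{message?.content}</p>
}
```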

The tokens stream in, the store updates, and only the active text bubble re-renders. The rest of the DOM stays quiet.
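For that to hold, each update has to replace only the message that changed. A minimal sketch of the update applied for each `text_delta`, assuming a hypothetical state shape where messages are keyed by id and the state tracks which message is currently streaming (`appendDelta` and `activeMessageId` are illustrative names, not from the project):

```typescript
// Hypothetical state shape: messages keyed by id, plus the id of the
// message currently receiving tokens.
type Message = { id: string; content: string }
type StreamState = {
  messages: Record<string, Message>
  activeMessageId: string | null
}

// Append a text_delta to the active message without mutating anything.
// Only the active message object and the map holding it are replaced;
// every other message keeps its reference, so a selector such as
// (s) => s.messages[otherId] returns the same object as before.
function appendDelta(state: StreamState, content: string): StreamState {
  const id = state.activeMessageId
  if (id === null) return state
  const current = state.messages[id] ?? { id, content: '' }
  return {
    ...state,
    messages: { ...state.messages, [id]: { ...current, content: current.content + content } },
  }
}
```

Wired to a store like `streamStore`, each `text_delta` event would become something like `streamStore.setState((s) => appendDelta(s, event.content))`. The untouched messages come back as the same objects, so their bubbles have nothing new to render.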

Agent configuration is complex. A workflow node might have 20 settings, dependent fields, and dynamic tool parameters.

Standard form libraries struggle with deeply nested, dynamic arrays (like configuring a list of fallback models or conditional routing rules).

TanStack Form treats form state like a database. It handles deep object updates without re-rendering the entire form. When I change the temperature slider on a model configuration, only that slider re-renders.

This is critical when building node configuration panels inside a React Flow canvas. A re-render of a massive form inside a canvas node destroys performance.
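The principle behind those isolated re-renders is structural sharing. A deep update copies only the objects on the path to the changed field, so anything subscribed to a different field sees an identical reference. The sketch below illustrates the idea in plain TypeScript. `setIn` and the config shape are hypothetical, and this is not TanStack Form's internal implementation:

```typescript
type Path = (string | number)[]

// Immutably set the value at `path`, copying only the objects and arrays
// along that path. Every branch off the path is reused by reference.
function setIn<T>(obj: T, path: Path, value: unknown): T {
  if (path.length === 0) return value as T
  const [key, ...rest] = path
  const source = obj as any // walk arbitrary nested data
  const child = setIn(source?.[key], rest, value)
  if (Array.isArray(source)) {
    // Arrays stay arrays: copy, then replace the one changed slot
    const copy = source.slice()
    copy[key as number] = child
    return copy as T
  }
  return { ...source, [key]: child } as T
}
```

Changing one fallback model's temperature yields a new root, a new `fallbacks` array, and a new item at that index, while the primary model config and the other fallbacks are reused untouched.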

When a user approves a human-in-the-loop request (see Human-in-the-Loop Done Right), the UI needs to update instantly, even if the backend takes a second to process the continuation.

TanStack Query makes this trivial.

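```typescript
import { useMutation } from '@tanstack/react-query'

// queryClient, approveGate, and updateOptimistically are app modules (not shown)
const mutation = useMutation({
  mutationFn: approveGate,
  onMutate: async (gateId: string) => {
    // Cancel incoming refetches so they don't overwrite the optimistic update
    await queryClient.cancelQueries({ queryKey: ['executions'] })
    // Snapshot the current cache so it can be restored on failure
    const previous = queryClient.getQueryData(['executions'])
    // Optimistically update the UI to show "Approved"
    queryClient.setQueryData(['executions'], (old) => updateOptimistically(old, gateId))
    return { previous }
  },
  // Roll back to the snapshot if the request fails
  onError: (_error, _gateId, context) => {
    queryClient.setQueryData(['executions'], context?.previous)
  },
  // Refetch either way so the cache matches the server
  onSettled: () => queryClient.invalidateQueries({ queryKey: ['executions'] }),
})
```

The user clicks "Approve", the UI immediately reflects the decision, and the workflow continues. If the network fails, `onError` restores the snapshot taken in `onMutate`. TanStack Query doesn't undo an optimistic cache write on its own, but it makes the rollback easy by passing whatever `onMutate` returns to `onError`.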
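`updateOptimistically` isn't defined in the mutation snippet. A minimal sketch, assuming the `['executions']` cache holds an array of executions that each list their gates. The `Execution` and `Gate` shapes are hypothetical:

```typescript
// Hypothetical cache shape for the ['executions'] query.
type Gate = { id: string; status: 'pending' | 'approved' | 'rejected' }
type Execution = { id: string; gates: Gate[] }

// Mark one gate as approved, returning new objects only along the changed path.
// The cache may still be empty (undefined) when the user clicks.
function updateOptimistically(old: Execution[] | undefined, gateId: string): Execution[] | undefined {
  if (!old) return old
  return old.map((execution): Execution =>
    execution.gates.some((g) => g.id === gateId)
      ? {
          ...execution,
          gates: execution.gates.map((g): Gate => (g.id === gateId ? { ...g, status: 'approved' } : g)),
        }
      : execution,
  )
}
```

Because the helper never mutates `old`, the snapshot taken in `onMutate` stays intact and can be restored verbatim on failure.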

With TanStack Start, routing is file-based and fully type-safe.

When navigating to a specific workflow execution trace, the loader fetches the data before the component renders.

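```tsx
import { createFileRoute } from '@tanstack/react-router'
// queryClient and executionQueryOptions are app modules (not shown)

export const Route = createFileRoute('/workspace/$workspaceId/executions/$executionId')({
  // Runs before the component renders: ensureQueryData returns cached data or fetches it
  loader: ({ params: { executionId } }) =>
    queryClient.ensureQueryData(executionQueryOptions(executionId)),
})
```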

Because it integrates perfectly with TanStack Query, the data is fetched on the server, dehydrated, sent to the client, and immediately available. No layout shift. No loading spinners popping in and out.

I've built many apps with Next.js. For a content site, it's fantastic.

But for a heavy client-side application like an agent orchestrator (where the value is in the canvas, the streaming chat, and the real-time execution graphs), the App Router's server-first mental model fights against what I'm trying to do.

TanStack Start gives me the best parts of SSR (fast initial load, SEO for public pages) but stays out of the way when I need to build a complex, highly interactive SPA.

This stack shines in a polyglot monorepo (see Polyglot Monorepos).

My Rust backend generates an OpenAPI spec. I generate a TypeScript client from that spec. I feed that client directly into TanStack Query options.

The types flow from the Rust database models all the way into the React form fields. If I rename a field in Rust and regenerate the spec and client, the TypeScript build fails exactly where the form input is defined.
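A minimal sketch of that hand-off, assuming the generator emits an `Execution` type and a client exposing a `getExecution` method (both hypothetical stand-ins for the real generated code). The factory returns a plain `{ queryKey, queryFn }` object, which is the shape TanStack Query's `useQuery` and `ensureQueryData` accept:

```typescript
// Hypothetical types standing in for what an OpenAPI generator might emit
// from the Rust models.
type Execution = { id: string; status: 'running' | 'waiting_for_approval' | 'completed' }
interface ApiClient {
  getExecution(id: string): Promise<Execution>
}

// Bind the client once, get back a one-argument options factory.
// The data type comes from the generated client, so a renamed field in
// Execution surfaces as a compile error wherever the query data is used.
function createExecutionQueryOptions(client: ApiClient) {
  return (executionId: string) => ({
    queryKey: ['executions', executionId] as const,
    queryFn: (): Promise<Execution> => client.getExecution(executionId),
  })
}
```

`const executionQueryOptions = createExecutionQueryOptions(apiClient)` would then give a one-argument helper like the one used in the route loader. In a real app, wrapping the returned object in TanStack Query's `queryOptions` helper also keeps the data type attached to the key for calls like `getQueryData`.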

AI applications require a frontend architecture that treats real-time, asynchronous streams as first-class citizens. The TanStack ecosystem provides the primitives to build it without fighting the framework.